An AI PoC helps validate feasibility, data readiness, and expected impact before scaling investment. It enables evidence-based decisions early in the AI lifecycle, reducing risk and avoiding costly misalignment later.

What is an AI proof of concept

An AI proof of concept (PoC) is a focused, time-boxed initiative designed to validate whether a specific artificial intelligence idea is technically feasible and practically useful within a given business context. Its purpose is not to deliver a production-ready system, but to generate evidence that an AI approach can work with real data, under real constraints, and against clearly defined success criteria.

Unlike conceptual discussions or high-level strategy documents, an AI PoC operates on tangible inputs and outputs. It typically involves selecting a narrow use case, preparing a representative data sample, building a minimal AI model or workflow, and evaluating its performance against predefined metrics. These metrics may include prediction accuracy, processing latency, automation potential, or measurable impact on an existing process.

From a decision-making perspective, an AI proof of concept serves as a validation mechanism. It helps answer practical questions early in the lifecycle, such as:

- Is the available data sufficient in quality and structure?

- Can the model achieve acceptable performance levels?

- Are infrastructure, integration, or compliance constraints likely to limit viability?

- Does the expected outcome justify further investment?

It is important to distinguish an AI PoC from broader AI experimentation. A proof of concept is intentionally constrained in scope and duration, often running for a few weeks rather than months. This limitation helps reduce cost and complexity while still providing reliable signals about feasibility and risk.

In practice, organizations use AI proofs of concept to reduce uncertainty before committing to larger initiatives such as pilot programs, minimum viable products, or full-scale AI implementations. When executed correctly, an AI PoC replaces assumptions with evidence, enabling more informed decisions about whether, how, and where to proceed next.

When an AI proof of concept makes sense (and when it doesn’t)

An AI proof of concept is most effective when uncertainty exists around feasibility, data suitability, or expected outcomes. It is not a mandatory step for every AI initiative, but it becomes highly valuable when decisions involve technical complexity, financial risk, or operational impact.

Situations where an AI proof of concept is justified

An AI PoC typically makes sense when one or more of the following conditions apply:

- The use case is new or unproven

If the organization has not previously applied AI to a similar problem, a proof of concept helps determine whether the approach is realistic before committing to broader development.

- Data quality or availability is uncertain

Many AI initiatives fail due to incomplete, inconsistent, or poorly structured data. A PoC allows teams to test assumptions about data readiness early, using real samples rather than theoretical models.

- The outcome must meet measurable thresholds

In scenarios where accuracy, latency, or reliability directly affect business performance, a PoC helps establish whether minimum acceptable benchmarks can be reached.

- Integration or compliance constraints are unclear

AI solutions often need to integrate with existing systems or comply with regulatory, security, or governance requirements. A proof of concept exposes potential blockers before they become costly issues.

- Investment decisions depend on evidence

When scaling AI requires budget allocation, infrastructure planning, or organizational change, a PoC provides concrete results to support or challenge the business case.

Situations where an AI proof of concept may be unnecessary

In some cases, running a proof of concept adds limited value and can slow down progress:

- The problem is well-understood and previously solved

If similar AI solutions are already operating successfully within the organization or industry, moving directly to a pilot or MVP may be more efficient.

- The use case does not require AI

When simpler rules-based automation or analytics can achieve the same outcome, an AI PoC may introduce unnecessary complexity.

- The scope is already constrained and validated

If data pipelines, models, and performance expectations are clearly defined and low-risk, a proof of concept may duplicate effort rather than reduce uncertainty.

- The decision has already been made

A PoC should inform a decision, not justify one retroactively. If the organization is committed to full implementation regardless of outcome, the value of a PoC diminishes.

Common misalignment to avoid

A frequent mistake is treating an AI proof of concept as a miniature production system. This often leads to overengineering, shifting goals, and unclear success criteria. A well-scoped PoC is designed to answer specific questions, not to solve every problem at once.

When aligned with the right level of uncertainty, an AI proof of concept acts as a filter, highlighting which ideas are worth scaling and which should be reconsidered before further investment.

AI proof of concept vs MVP vs pilot: understanding the differences

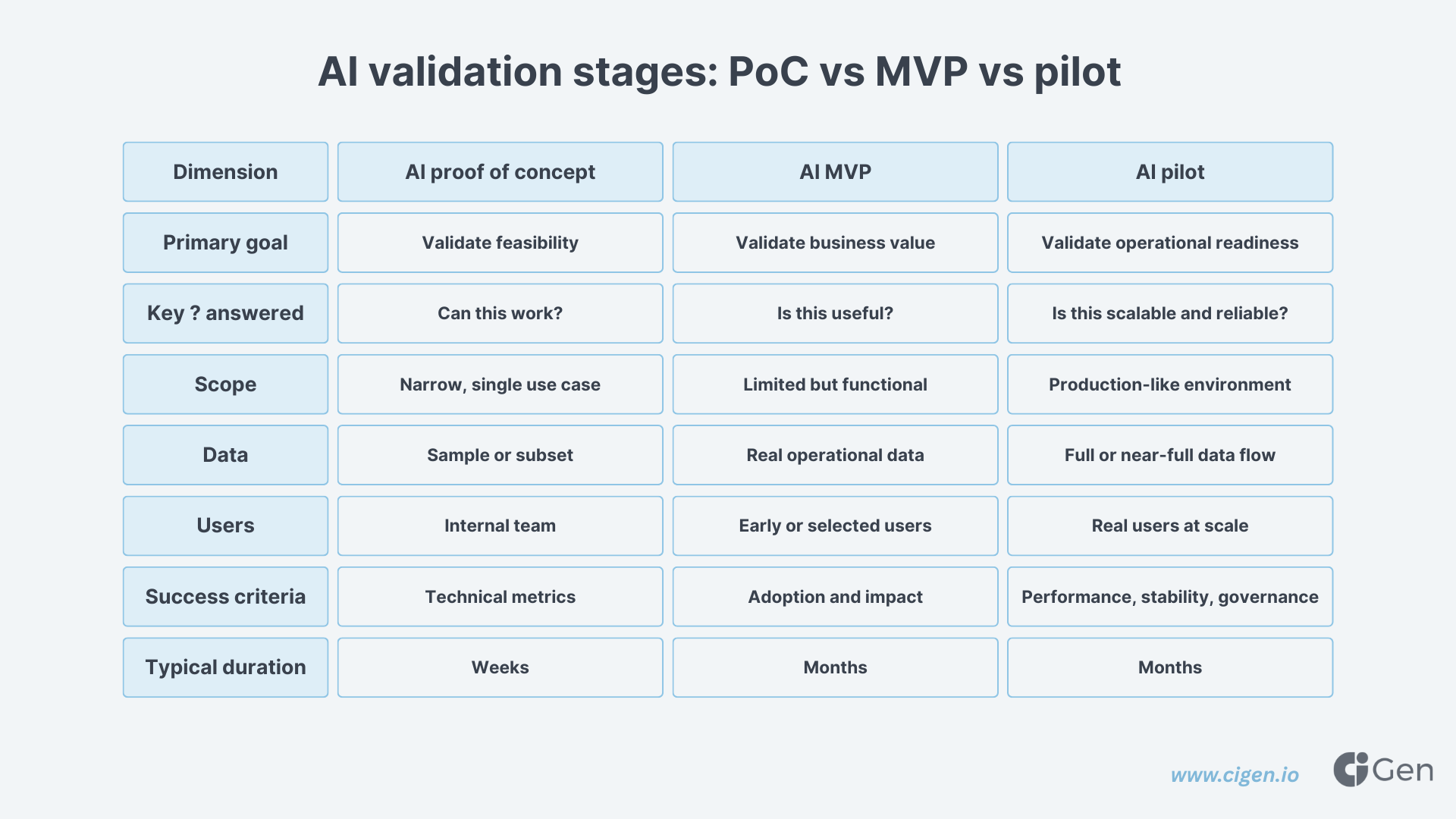

AI initiatives often stall or become misaligned because the terms proof of concept, minimum viable product, and pilot are used interchangeably. While related, each serves a distinct purpose and supports a different type of decision. Understanding these differences helps set realistic expectations and choose the right starting point.

AI proof of concept: validating feasibility

An AI proof of concept focuses on feasibility and risk reduction. Its primary goal is to determine whether an AI approach can work at all under real-world constraints.

Typical characteristics include:

- Narrow scope and limited data samples

- Simplified architecture and minimal integrations

- Short duration, often measured in weeks

- Success measured by technical and functional metrics

At this stage, the emphasis is on evidence rather than completeness. An AI PoC answers questions such as whether the data supports the intended model, whether acceptable performance levels are achievable, and whether major constraints exist.

AI MVP: validating value

A minimum viable product (MVP) moves beyond feasibility and into value validation. It is designed to test whether the AI solution delivers meaningful benefits to users or operations.

Key differences compared to a PoC:

- Broader scope with real users or workflows

- More stable architecture and basic integrations

- Limited but functional user experience

- Success measured by adoption, usability, and early impact

An MVP assumes that technical feasibility has already been established. Skipping directly to this stage without prior validation often increases rework and cost.

AI pilot: validating readiness at scale

An AI pilot evaluates whether the solution is ready for wider deployment. It typically operates in a controlled production-like environment.

A pilot usually includes:

- Production-grade data pipelines

- Security, monitoring, and governance controls

- Integration with core systems

- Performance evaluation under realistic load

At this point, the main risks are operational rather than technical. The focus shifts to reliability, scalability, and organizational readiness.

Choosing the right starting point

The correct entry stage depends on the level of uncertainty:

- High uncertainty about data or models → AI proof of concept

- Known feasibility but unclear business value → AI MVP

- Proven value but unknown operational impact → AI pilot

Starting at the wrong level often leads to either unnecessary delays or avoidable failure. A well-chosen AI proof of concept helps organizations enter the AI lifecycle with clearer expectations and fewer assumptions.
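The uncertainty-to-stage mapping above can be written down as a simple rule. This is a minimal sketch that assumes the team can reduce "what is already known" to two yes/no answers. The function name `recommend_starting_stage` and its parameters are hypothetical and only illustrate the ordering of the checks.

```python
def recommend_starting_stage(feasibility_proven: bool, value_proven: bool) -> str:
    """Map what is already known to a suggested entry stage (illustrative only)."""
    if not feasibility_proven:
        # High uncertainty about data or models
        return "AI proof of concept"
    if not value_proven:
        # Feasibility known, business value still unclear
        return "AI MVP"
    # Value proven; the open question is operational impact at scale
    return "AI pilot"


# Example: a team with untested data and no working model yet
print(recommend_starting_stage(feasibility_proven=False, value_proven=False))
# prints "AI proof of concept"
```

Feasibility is checked first on purpose. If feasibility is unproven, any assumption about business value is premature.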

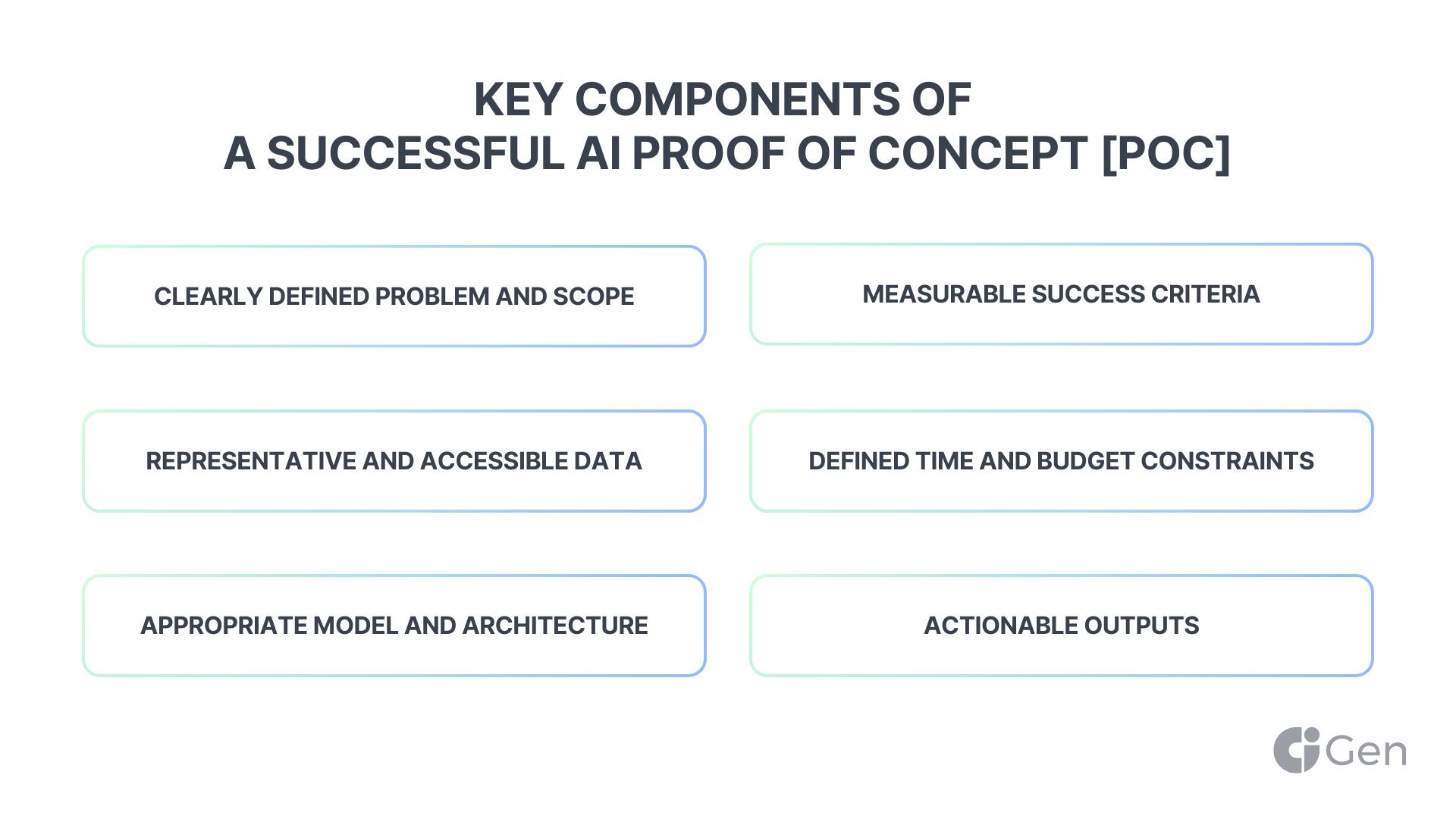

Key components of a successful AI proof of concept

A successful AI proof of concept is not defined by technical sophistication, but by clarity of purpose and disciplined execution. Its value lies in how effectively it answers specific feasibility questions while avoiding unnecessary complexity.

Clearly defined problem and scope

Every AI PoC should start with a narrowly framed problem statement. This includes defining what the model is expected to predict, classify, recommend, or automate, and under which conditions. Limiting scope is essential, as attempting to cover multiple scenarios often dilutes results and increases ambiguity.

A well-scoped PoC focuses on one primary use case, one data source or dataset type, and a small set of success criteria.

Representative and accessible data

Data selection plays a critical role in the outcome of an AI PoC. While full production datasets are not required, the data used must be representative of real-world conditions. This includes typical data volume, structure, and variability.

Early validation of data availability, quality, and labeling effort helps determine whether the use case is viable or whether significant preprocessing or data engineering work will be required later.
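An early data readiness check can be as small as a per-field missing-value rate plus the share of records that already carry a label. The sketch below assumes the sample is available as a list of dictionaries. The helper `profile_sample` and the invoice field names are hypothetical placeholders.

```python
from typing import Any


def profile_sample(
    records: list[dict[str, Any]], required_fields: list[str], label_field: str
) -> dict[str, float]:
    """Report per-field missing-value rates and the share of labeled records."""
    n = len(records)
    if n == 0:
        raise ValueError("the sample is empty")
    report: dict[str, float] = {}
    for field in required_fields:
        # Treat absent keys, None, and empty strings as missing
        missing = sum(1 for r in records if r.get(field) in (None, ""))
        report[f"missing_rate:{field}"] = missing / n
    labeled = sum(1 for r in records if r.get(label_field) not in (None, ""))
    report["label_coverage"] = labeled / n
    return report


# Hypothetical invoice sample; field names are placeholders
sample = [
    {"invoice_id": "A1", "amount": 120.0, "label": "approve"},
    {"invoice_id": "A2", "amount": None, "label": "reject"},
    {"invoice_id": "A3", "amount": 87.5, "label": None},
    {"invoice_id": "", "amount": 40.0},
]
report = profile_sample(sample, ["invoice_id", "amount"], label_field="label")
print(report)
```

A low `label_coverage` value is an early signal that labeling effort, not modeling, may dominate the cost of later phases.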

Appropriate model and architecture choices

At the proof-of-concept stage, simplicity is often preferable to optimization. The goal is to demonstrate feasibility, not to achieve peak performance. Using well-understood models or managed AI services can accelerate learning and reduce implementation risk.

Architecture decisions should be sufficient to support evaluation, without prematurely introducing scalability, redundancy, or advanced MLOps features.

Measurable success criteria

An AI PoC must define what success looks like before development begins. These criteria should be measurable and aligned with the intended outcome of the use case.

Examples include:

- Minimum accuracy or error thresholds

- Processing time limits

- Reduction in manual effort

- Quality of model outputs as signals for downstream decisions

Clear metrics prevent subjective interpretation of results and support transparent decision-making.
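One way to keep success criteria objective is to record them as data before development starts and then check measured results against them mechanically. This is a minimal sketch. The metric names and thresholds are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """A success criterion agreed before development starts."""

    name: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, value: float) -> bool:
        if self.higher_is_better:
            return value >= self.threshold
        return value <= self.threshold


# Illustrative thresholds only; real values depend on the use case
CRITERIA = [
    Criterion("accuracy", 0.85),
    Criterion("p95_latency_ms", 500.0, higher_is_better=False),
    Criterion("manual_effort_reduction", 0.30),
]


def evaluate(results: dict[str, float], criteria: list[Criterion]) -> dict[str, bool]:
    """Compare measured results with the pre-agreed thresholds.

    A criterion with no measured result counts as not met.
    """
    return {c.name: (c.name in results and c.is_met(results[c.name])) for c in criteria}


measured = {"accuracy": 0.88, "p95_latency_ms": 620.0, "manual_effort_reduction": 0.35}
outcome = evaluate(measured, CRITERIA)
print(outcome)
```

A missing result counts as "not met" so that an unmeasured criterion cannot quietly pass.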

Time and budget constraints

Effective proofs of concept are time-boxed. Setting clear limits on duration and resources helps maintain focus and ensures that the PoC remains a validation exercise rather than an open-ended project.

This discipline also enables more accurate cost estimation for subsequent phases, such as an MVP or pilot.

Actionable outputs

The outcome of an AI proof of concept should include more than a working model. Key deliverables typically include performance results, identified limitations, data gaps, and recommendations for next steps.

These outputs form the basis for deciding whether to proceed, iterate, or stop the initiative.

Typical AI proof of concept architecture and tools

The architecture of an AI proof of concept should support rapid validation rather than long-term scalability. Its purpose is to test assumptions with minimal overhead while still reflecting the constraints of a real operating environment.

Data ingestion and preparation

Most AI PoCs begin with a limited data pipeline designed to ingest, clean, and transform a representative subset of data. This step often exposes hidden challenges, such as inconsistent formats, missing values, or unclear ownership.

At this stage, data preparation is usually lightweight and may involve:

- Extracting data from a single source or snapshot

- Basic cleaning and normalization

- Manual or semi-automated labeling where required

The goal is to understand data feasibility, not to build a fully automated pipeline.
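The lightweight preparation described above might look like the following sketch. It parses a numeric field, normalizes a categorical field, and adds a min-max scaled column. The field names are hypothetical, and treating commas as thousands separators is an assumption that would not hold for every locale.

```python
from typing import Any


def clean_rows(
    rows: list[dict[str, Any]], numeric_field: str, category_field: str
) -> list[dict[str, Any]]:
    """Lightweight cleaning for a PoC data snapshot.

    - parses the numeric field, dropping rows where it cannot be read
    - trims and lower-cases the category field, filling blanks with "unknown"
    - adds a min-max scaled copy of the numeric field
    """
    cleaned = []
    for row in rows:
        raw = row.get(numeric_field)
        if raw is None:
            continue
        try:
            # Assumes commas are thousands separators, e.g. "1,200"
            value = float(str(raw).strip().replace(",", ""))
        except ValueError:
            continue  # unparseable values such as "n/a"
        category = str(row.get(category_field) or "").strip().lower() or "unknown"
        cleaned.append({**row, numeric_field: value, category_field: category})

    if cleaned:
        low = min(r[numeric_field] for r in cleaned)
        high = max(r[numeric_field] for r in cleaned)
        span = high - low
        for r in cleaned:
            # If all values are identical, scale to 0.0 instead of dividing by zero
            r[f"{numeric_field}_scaled"] = (r[numeric_field] - low) / span if span else 0.0
    return cleaned


raw_rows = [
    {"amount": " 1,200 ", "region": "North "},
    {"amount": "300", "region": "north"},
    {"amount": "n/a", "region": "South"},
    {"amount": None, "region": "EAST"},
    {"amount": "700", "region": ""},
]
rows = clean_rows(raw_rows, numeric_field="amount", category_field="region")
for r in rows:
    print(r)
```

Counting how many rows are dropped, here two out of five, is itself a useful data-feasibility finding.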

Model development and experimentation

Model development in a proof of concept focuses on speed and clarity. Teams typically start with baseline models to establish a reference point before exploring more advanced approaches if necessary.

Common characteristics include:

- Use of standard machine learning or AI frameworks

- Limited hyperparameter tuning

- Emphasis on interpretability over optimization

This approach allows teams to quickly assess whether performance is fundamentally achievable.
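A baseline gives every later result a reference point. This sketch uses a majority-class baseline that always predicts the most frequent training label. A trivial threshold rule stands in for a first candidate model. Both the toy data and the `candidate` rule are hypothetical.

```python
from collections import Counter
from typing import Any, Callable


def majority_baseline(train_labels: list[str]) -> Callable[[Any], str]:
    """Predict the most frequent training label for every input."""
    most_common = Counter(train_labels).most_common(1)[0][0]
    return lambda _features: most_common


def candidate(x: dict[str, Any]) -> str:
    """Hypothetical rule standing in for a first candidate model."""
    return "fraud" if x["amount"] > 1000 else "ok"


def accuracy(y_true: list[str], y_pred: list[str]) -> float:
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Toy data for illustration only
train_labels = ["ok", "ok", "ok", "fraud"]
test_features = [{"amount": 50}, {"amount": 5000}, {"amount": 80}, {"amount": 9000}]
test_labels = ["ok", "fraud", "ok", "fraud"]

baseline = majority_baseline(train_labels)
baseline_acc = accuracy(test_labels, [baseline(x) for x in test_features])
candidate_acc = accuracy(test_labels, [candidate(x) for x in test_features])
print(f"baseline={baseline_acc:.2f} candidate={candidate_acc:.2f}")
```

On imbalanced data a majority baseline can score deceptively well. Reporting it next to the candidate shows how much of the performance the model actually adds.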

Execution environment

AI proofs of concept are often developed in isolated environments to avoid disrupting existing systems. These may include local development setups, cloud-based notebooks, or temporary environments configured specifically for experimentation.

Cloud platforms are frequently used due to their flexibility, but infrastructure decisions remain intentionally minimal. Persistent environments, failover mechanisms, and advanced security controls are usually deferred to later stages.

Evaluation and monitoring

Evaluation is a core component of the AI PoC architecture. Models are tested against predefined metrics to determine whether they meet the minimum success criteria established earlier.

Basic monitoring typically includes:

- Performance metrics tracking

- Error analysis

- Simple logging for reproducibility

These insights help identify whether shortcomings are due to data limitations, model choice, or external constraints.
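"Simple logging for reproducibility" can mean appending one JSON record per run. Each record captures the configuration, a stable hash of it, an identifier for the data snapshot, and the resulting metrics. This is a minimal sketch. The `log_run` helper, the record fields, and the `sample-v1` snapshot label are hypothetical.

```python
import hashlib
import json
import tempfile
import time
from pathlib import Path
from typing import Any


def log_run(
    log_path: Path, config: dict[str, Any], metrics: dict[str, float], data_snapshot: str
) -> dict[str, Any]:
    """Append one experiment record to a JSON Lines file."""
    # Canonical JSON (sorted keys) so identical configs always hash the same
    config_json = json.dumps(config, sort_keys=True)
    record = {
        "timestamp": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
        "config": config,
        "config_hash": hashlib.sha256(config_json.encode("utf-8")).hexdigest()[:12],
        "data_snapshot": data_snapshot,  # e.g. an extract name or file checksum
        "metrics": metrics,
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
    return record


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "runs.jsonl"
    first = log_run(path, {"model": "baseline", "seed": 42}, {"accuracy": 0.80}, "sample-v1")
    second = log_run(path, {"seed": 42, "model": "baseline"}, {"accuracy": 0.80}, "sample-v1")
    logged = [json.loads(line) for line in path.read_text(encoding="utf-8").splitlines()]

print(first["config_hash"] == second["config_hash"], len(logged))
```

Because the keys are sorted before hashing, the two runs share a `config_hash` even though their configs were written in different key orders.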

Documentation and knowledge capture

While often overlooked, documentation is a critical output of a proof of concept. Recording assumptions, decisions, results, and limitations ensures that learnings are transferable to future phases.

Well-documented PoCs reduce dependency on individuals and improve continuity when transitioning to MVP or pilot development.

Measuring AI proof of concept success and return on investment

The outcome of an AI proof of concept should enable a clear decision: proceed, adjust, or stop. Measuring success therefore goes beyond technical performance and includes an early view of potential business impact.

Technical performance metrics

Technical metrics provide the first validation layer. These metrics vary depending on the use case but should always be tied to the original problem definition.

Common examples include:

- Prediction accuracy, precision, recall, or error rates

- Processing time or latency

- Model stability across different data samples

- False positive or false negative rates

These indicators help determine whether the AI approach is viable under realistic conditions.
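For a binary task, the metrics listed above all derive from the four confusion-matrix counts. Model stability can be summarized as the spread of a metric measured on several data samples. This sketch is illustrative. It reports 0.0 when a ratio is undefined, which is a simplification, and the per-sample scores are made-up example numbers.

```python
from statistics import mean, pstdev


def _ratio(numerator: int, denominator: int) -> float:
    # Simplification: report 0.0 when the ratio is undefined
    return numerator / denominator if denominator else 0.0


def binary_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Precision, recall, and error rates for a binary task (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "precision": _ratio(tp, tp + fp),
        "recall": _ratio(tp, tp + fn),
        "false_positive_rate": _ratio(fp, fp + tn),
        "false_negative_rate": _ratio(fn, fn + tp),
    }


def stability(scores_per_sample: list[float]) -> dict[str, float]:
    """Mean and spread of one metric measured on several data samples."""
    return {"mean": mean(scores_per_sample), "std": pstdev(scores_per_sample)}


metrics = binary_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 0, 1, 0, 1, 0, 0, 1])
# Recall measured on, say, three separate data extracts (illustrative numbers)
spread = stability([0.81, 0.79, 0.80])
print(metrics, spread)
```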

Operational impact indicators

Beyond model performance, an AI PoC should assess how results translate into operational improvement. This often requires mapping technical outputs to process-level effects.

Examples may include:

- Reduction in manual review or data entry effort

- Faster decision-making cycles

- Improved consistency or error reduction

- Better utilization of existing resources

While these impacts are often estimated at the PoC stage, they provide important context for evaluating next steps.
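Mapping a technical result to a process-level effect is often simple arithmetic. This sketch estimates monthly manual hours saved from the share of items the model handled acceptably in the PoC. All inputs are hypothetical. `residual_effort`, the share of handling time still spent on automated items, is an assumption that a PoC usually cannot measure directly.

```python
def estimated_monthly_hours_saved(
    monthly_items: int,
    minutes_per_item: float,
    automation_rate: float,
    residual_effort: float,
) -> float:
    """Rough estimate of manual hours saved per month.

    automation_rate: share of items the model handled acceptably in the PoC
    residual_effort: share of the original handling time still spent on those
        items (spot checks, corrections); an assumption, not a PoC measurement
    """
    if not (0.0 <= automation_rate <= 1.0 and 0.0 <= residual_effort <= 1.0):
        raise ValueError("rates must be between 0 and 1")
    automated_items = monthly_items * automation_rate
    return automated_items * minutes_per_item * (1.0 - residual_effort) / 60.0


hours = estimated_monthly_hours_saved(
    monthly_items=10_000, minutes_per_item=4.0, automation_rate=0.6, residual_effort=0.25
)
print(f"~{hours:.0f} hours/month")
```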

Cost and effort assessment

An AI proof of concept also reveals the effort required to achieve results. This includes development time, data preparation effort, infrastructure usage, and dependency on specialized skills.

Understanding these inputs helps estimate:

- Expected cost of scaling to an MVP or pilot

- Ongoing operational and maintenance requirements

- Trade-offs between in-house development and external support

These insights are often as valuable as performance metrics themselves.

Early return on investment framing

A PoC is not expected to deliver measurable ROI, but it should support early ROI estimation. This involves comparing projected benefits against estimated implementation and operational costs.

Even directional indicators, such as potential cost savings per process or efficiency gains per transaction, help prioritize initiatives and justify further investment.
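A directional ROI estimate compares projected benefits with implementation and operating costs over a fixed horizon and adds a payback period. This is a sketch only. The three-year horizon, the per-transaction saving, and all cost figures are illustrative assumptions.

```python
def early_roi(
    annual_benefit: float,
    implementation_cost: float,
    annual_operating_cost: float,
    years: int = 3,
) -> dict[str, float]:
    """Directional ROI and payback period over a fixed horizon."""
    total_benefit = annual_benefit * years
    total_cost = implementation_cost + annual_operating_cost * years
    net_annual = annual_benefit - annual_operating_cost
    return {
        "roi": (total_benefit - total_cost) / total_cost,
        # Months until cumulative net benefit covers the implementation cost
        "payback_months": implementation_cost / net_annual * 12 if net_annual > 0 else float("inf"),
    }


# Directional inputs: saving per transaction x expected annual volume
annual_benefit = 0.40 * 300_000
estimate = early_roi(annual_benefit, implementation_cost=150_000, annual_operating_cost=30_000)
print(estimate)
```

If operating costs exceed the benefit, the payback period is reported as infinite. That is itself a clear "stop or rethink" signal.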

Decision outcomes

A well-measured AI proof of concept leads to one of three outcomes:

- Proceed with confidence toward MVP or pilot

- Refine the approach based on identified gaps

- Stop the initiative before further cost is sunk into it

Treating all three outcomes as valid ensures that the PoC fulfills its role as a decision tool rather than a success-or-failure exercise.
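Agreeing on a decision rule before the PoC starts makes each of the three outcomes explicit. The policy below is one hypothetical example, not a standard. If every criterion is met, the initiative proceeds. A missed critical criterion means stop. Only non-critical gaps mean refine. Teams may reasonably draw these lines differently.

```python
def decide(criteria_met: dict[str, bool], critical: set[str]) -> str:
    """Illustrative decision policy agreed before the PoC starts."""
    missed = {name for name, met in criteria_met.items() if not met}
    # A critical criterion that was never measured counts as missed
    missed |= critical - set(criteria_met)
    if not missed:
        return "proceed"
    if missed & critical:
        return "stop"
    return "refine"


print(decide({"accuracy": True, "latency": False}, critical={"accuracy"}))
# prints "refine"
```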

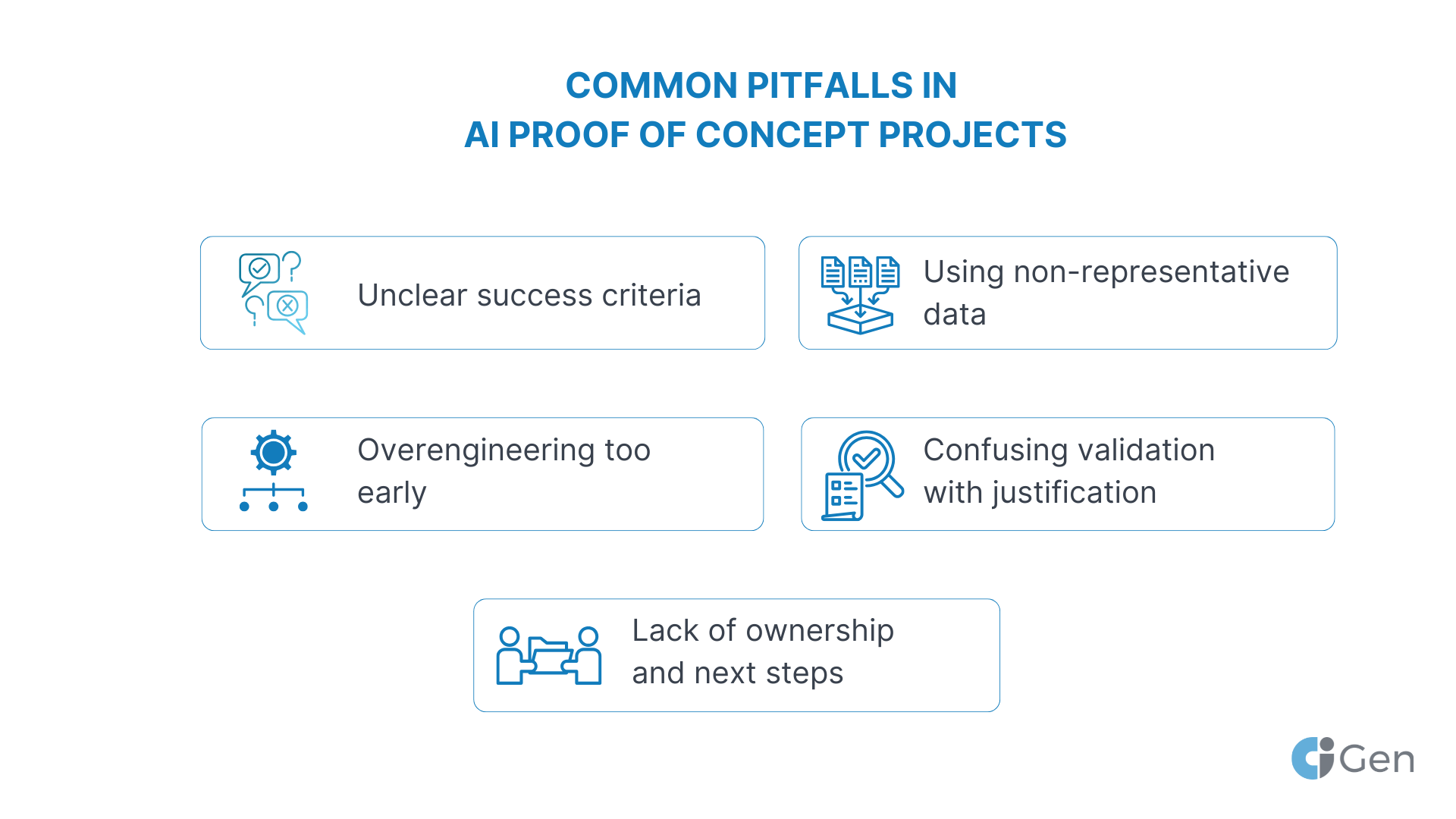

Common pitfalls in AI proof of concept projects

Despite their limited scope, AI proofs of concept often fail to deliver clarity due to avoidable missteps. Recognizing these pitfalls early improves the reliability of results and the quality of decisions that follow.

Unclear success criteria

One of the most frequent issues is starting a PoC without clearly defined success metrics. When outcomes are subjective or constantly shifting, it becomes difficult to determine whether the initiative was effective or not.

A proof of concept should answer specific questions, not generate open-ended insights.

Overengineering too early

Treating an AI PoC as a near-production system often leads to unnecessary complexity. Advanced architectures, excessive integrations, and premature optimization can inflate costs and obscure core feasibility questions.

At this stage, simplicity supports faster learning and clearer outcomes.

Using non-representative data

Testing AI models on idealized or incomplete datasets can produce misleading results. When data does not reflect real-world variability, performance metrics may appear stronger than they will be in practice.

Representative data is more valuable than perfectly curated data.
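A quick way to spot a non-representative sample is to compare its category mix with a production reference. Total variation distance is used below: 0.0 means an identical mix and 1.0 means no overlap. This is a sketch with made-up channel data. Any cut-off for "too different" is a team decision rather than a fixed rule.

```python
from collections import Counter
from collections.abc import Hashable, Iterable


def category_shares(values: Iterable[Hashable]) -> dict[Hashable, float]:
    counts = Counter(values)
    total = sum(counts.values())
    return {key: count / total for key, count in counts.items()}


def total_variation_distance(sample: Iterable[Hashable], reference: Iterable[Hashable]) -> float:
    """0.0 = identical category mix, 1.0 = no overlap at all."""
    p = category_shares(sample)
    q = category_shares(reference)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))


# Illustrative: the PoC sample over-represents email and misses chat entirely
reference = ["email"] * 60 + ["phone"] * 30 + ["chat"] * 10
poc_sample = ["email"] * 90 + ["phone"] * 10
drift = total_variation_distance(poc_sample, reference)
print(f"{drift:.2f}")
```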

Confusing validation with justification

A PoC is meant to challenge assumptions, not confirm predetermined decisions. When the outcome is expected to be positive regardless of results, the exercise loses its value as a risk-reduction tool.

Stopping an initiative after a PoC can be a sign of success, not failure.

Lack of ownership and next steps

Without clear ownership or defined follow-up actions, PoC outcomes often remain unused. Results should be documented, reviewed, and tied to a concrete decision or roadmap.

An effective PoC closes the loop between experimentation and execution.

Conclusion: using AI proofs of concept to guide smarter investment

An AI proof of concept provides a structured way to replace assumptions with evidence before committing to broader implementation. By focusing on feasibility, data readiness, and measurable outcomes, it helps organizations evaluate AI initiatives with greater confidence and discipline.

When properly scoped and measured, an AI PoC clarifies which ideas deserve further investment and which should be reconsidered. This approach reduces technical risk, improves resource allocation, and supports more informed decision-making across the AI lifecycle.