Refactoring is one of the foundational practices for maintaining and evolving codebases, and a core part of software modernization. At its simplest, refactoring is the disciplined process of restructuring existing source code without changing its external behavior, with the goal of improving internal design, readability, maintainability, and overall quality.

This practice sits at the intersection of ongoing development and larger transformation efforts. As organizations increasingly transition to cloud platforms such as Microsoft Azure, application modernization often involves both relocating workloads and refactoring them to take full advantage of cloud-native capabilities. Cloud migration is not only about lifting code and moving it to new infrastructure; it is equally about preparing software for long-term scalability, resilience, and efficiency. Refactoring plays a critical role in that preparation.

The broader digital transformation trend underscores this shift. According to the 2025 State of the Cloud Report by Flexera, over half of enterprise workloads are now running in public clouds, and a majority of organizations are continuing to extend their cloud footprint. This growing adoption intensifies the need for modernization practices like refactoring, which help teams address technical debt, streamline codebases, and unlock the performance, security, and cost benefits of modern architectures.

What is refactoring?

Refactoring is the practice of improving the internal structure of existing code without changing its observable behavior. The goal is to make the codebase easier to understand, maintain, and evolve over time.

One of the most widely cited definitions comes from Martin Fowler, who describes refactoring as:

“A disciplined technique for restructuring an existing body of code, altering its internal structure without changing its external behavior.”

This definition highlights two essential characteristics that distinguish refactoring from other development activities:

- Behavior preservation – the system must continue to work exactly as before from a user and integration perspective

- Structural improvement – changes are focused on code organization, clarity, and design quality

In practice, refactoring addresses issues such as unnecessary complexity, duplicated logic, unclear naming, tightly coupled components, or outdated design patterns. These issues often accumulate gradually as software evolves, especially in long-lived systems or products developed by multiple teams over time.
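A minimal sketch of behavior preservation, written in Python for illustration: `calc` stands in for a hypothetical legacy function, and `order_total_cents` is its refactored counterpart with clearer names, a named constant, and the built-in `sum` in place of a manual loop. The helper `behaves_the_same` is also hypothetical; it simply runs both versions on sample inputs, a lightweight way to check that external behavior did not change.

```python
# Hypothetical legacy implementation: terse names and a magic number.
def calc(p, c):
    t = 0
    for x in p:
        t = t + x
    if c == "gold":
        t = t - t * 10 // 100
    return t


# Refactored version: same inputs, same outputs, clearer structure.
GOLD_DISCOUNT_PERCENT = 10  # named constant replaces the literal 10


def order_total_cents(prices_cents, customer_type):
    """Sum item prices (in integer cents) and apply the gold-customer discount."""
    subtotal = sum(prices_cents)
    if customer_type == "gold":
        subtotal -= subtotal * GOLD_DISCOUNT_PERCENT // 100
    return subtotal


def behaves_the_same(old, new, cases):
    """Return True only if both functions agree on every sample input."""
    return all(old(*args) == new(*args) for args in cases)


sample_cases = [
    ([], "regular"),
    ([1000, 550], "gold"),
    ([1999, 1, 300], "regular"),
    ([10000], "gold"),
]
print(behaves_the_same(calc, order_total_cents, sample_cases))  # True
```

Integer cents are used deliberately: floating-point sums can differ in their last bits depending on how they are computed, which would make an exact equality check misleading.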

Refactoring as a continuous practice

Refactoring is rarely a one-off activity. In modern software engineering, it is treated as a continuous practice, embedded into everyday development rather than scheduled as a separate “cleanup phase.” Teams refactor code when:

- extending existing functionality

- preparing the codebase for new architectural changes

- addressing maintainability or performance concerns

- reducing technical debt that slows down development

This approach is common in agile and DevOps environments, where small, incremental improvements are preferred over large, risky rewrites.

What refactoring is not

To avoid confusion, it is important to clearly separate refactoring from related but different activities:

- Refactoring is not rewriting – the system is not rebuilt from scratch

- Refactoring is not feature development – no new capabilities are added

- Refactoring is not bug fixing – functional behavior should remain unchanged

These distinctions matter, especially in planning and estimation, as refactoring is primarily an engineering quality activity, not a product feature.

Why refactoring is necessary

Refactoring is primarily driven by the need to control complexity and maintain long-term code quality as software systems evolve. Even well-designed applications tend to degrade over time as new features are added, requirements change, and multiple contributors work on the same codebase. Without deliberate effort to improve internal structure, this gradual decline can significantly affect development speed, reliability, and cost.

Accumulation of technical debt

One of the most common reasons for refactoring is the accumulation of technical debt. Technical debt represents design or implementation choices that may be expedient in the short term but increase the cost of future changes. Examples include duplicated logic, overly complex methods, or tightly coupled components.

As technical debt grows, teams often experience:

- longer development and testing cycles

- increased risk of introducing defects

- difficulty onboarding new developers

- reduced confidence when modifying existing code

Refactoring helps address technical debt incrementally, making the codebase more predictable and easier to work with.

Code smells as early warning signals

The need for refactoring is often identified through code smells, symptoms in the code that indicate deeper structural issues. Code smells are not bugs, but they suggest that the design may be fragile or hard to maintain.

Common examples include:

- long or overly complex methods

- duplicated code across modules or services

- unclear or misleading naming

- large classes handling multiple responsibilities

- excessive conditional logic

Addressing these issues through refactoring improves clarity and reduces the likelihood of future defects.
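Some of these smells can be flagged automatically. The sketch below uses Python's standard `ast` module to report functions that are unusually long or contain many `if` branches. The thresholds `MAX_FUNCTION_LINES` and `MAX_BRANCHES` are arbitrary assumptions, and dedicated static-analysis tools apply far richer rules.

```python
import ast

MAX_FUNCTION_LINES = 20  # assumed threshold for "long method"
MAX_BRANCHES = 3         # assumed threshold for "excessive conditional logic"


def find_smells(source):
    """Return human-readable findings for long or branch-heavy functions."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            continue
        # end_lineno is available on AST nodes from Python 3.8 onward.
        length = node.end_lineno - node.lineno + 1
        if length > MAX_FUNCTION_LINES:
            findings.append(f"{node.name}: long method ({length} lines)")
        # Count if-statements and conditional expressions inside the function.
        branches = sum(isinstance(n, (ast.If, ast.IfExp)) for n in ast.walk(node))
        if branches > MAX_BRANCHES:
            findings.append(
                f"{node.name}: excessive conditional logic ({branches} branches)"
            )
    return findings


sample = '''
def shipping_cost(weight, region):
    if region == "EU":
        return 5
    if region == "US":
        return 7
    if region == "APAC":
        return 9
    if weight > 20:
        return 15
    return 11
'''
print(find_smells(sample))
# ['shipping_cost: excessive conditional logic (4 branches)']
```

A finding like this is a prompt for a closer look, not proof of a defect; the thresholds should be tuned to what the team considers reasonable.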

Below you'll find a table of the most common code smells, based primarily on the taxonomy introduced by Martin Fowler in Refactoring: Improving the Design of Existing Code and supplemented with considerations from modern engineering practice.

Impact on maintainability and change velocity

As applications mature, the majority of engineering effort typically shifts from building new systems to maintaining and extending existing ones. Poorly structured code increases the effort required for even minor changes, turning routine enhancements into high-risk tasks.

Refactoring supports:

- faster implementation of new features

- safer changes due to clearer structure and intent

- improved testability and reliability

- easier adaptation to new platforms, frameworks, or architectures

This is particularly relevant in environments where systems are being prepared for modernization, cloud migration, or integration with new services.

Refactoring as a risk-reduction mechanism

Rather than being purely an optimization activity, refactoring functions as a risk management practice. By continuously improving code structure, teams reduce the likelihood of large-scale failures, unplanned rewrites, or costly emergency fixes later in the lifecycle.

In this sense, refactoring is less about perfection and more about keeping systems adaptable as business, technology, and operational requirements change.

Refactoring vs rewriting vs re-architecting

As software systems evolve, teams often face a strategic decision: should the existing code be refactored, rewritten, or fundamentally re-architected? These approaches are frequently confused, yet they differ significantly in scope, risk, cost, and intent. Understanding the distinction is critical for making informed technical and business decisions.

Refactoring

Refactoring focuses on improving the internal structure of existing code while preserving its external behavior. The system continues to work as before, but the code becomes easier to read, maintain, test, and extend.

Typical characteristics:

- Incremental and continuous

- Low to moderate risk when supported by tests

- No change in system functionality

- Usually performed alongside feature development or maintenance

Refactoring is most effective when the current architecture is fundamentally sound, but the implementation quality has degraded over time.

Rewriting

Rewriting involves replacing an existing codebase with a new implementation, often using the same functional requirements as a reference. While the goal may be cleaner code or modern technology, rewriting discards most or all of the existing implementation.

Typical characteristics:

- Large upfront effort

- High risk of delays and scope creep

- Functional regressions are common

- Requires parallel maintenance of old and new systems

Rewrites are often justified when the existing codebase is no longer maintainable or when accumulated design constraints make incremental improvement impractical. However, industry experience suggests that rewrites frequently take longer and cost more than initially planned.

Re-architecting

Re-architecting goes beyond code-level changes and addresses fundamental structural decisions such as system boundaries, deployment models, data flow, or scalability patterns. This may include moving from a monolithic architecture to microservices, introducing event-driven communication, or adopting cloud-native design principles.

Typical characteristics:

- Strategic, system-level scope

- May include refactoring or rewriting as sub-activities

- Higher impact on operations, teams, and tooling

- Often aligned with modernization or cloud migration initiatives

Re-architecting is typically driven by non-functional requirements such as scalability, resilience, performance, or regulatory compliance rather than code quality alone.

Choosing the right approach

The choice between refactoring, rewriting, and re-architecting depends on several factors:

- Code quality vs architectural fit. Is the problem primarily internal code structure or system design?

- Business risk tolerance. Can the organization absorb long delivery cycles or functional regressions?

- Rate of change. How frequently does the system need to evolve?

- Modernization goals. Are there platform or infrastructure shifts involved?

In many real-world scenarios, refactoring is used as a foundation, enabling gradual architectural change without the disruption of a full rewrite. This incremental approach is often preferred when systems must remain operational while evolving.

Common refactoring techniques and patterns

Refactoring is typically performed through a series of small, well-defined code transformations rather than large structural changes. These transformations are often referred to as refactoring techniques or patterns. They are designed to improve readability, reduce duplication, and simplify logic while keeping behavior unchanged.

Below are some popular refactoring techniques:

Improving readability and intent

Many refactorings focus on making code easier to understand. Clear intent reduces cognitive load and lowers the risk of errors during future changes.

Typical techniques include:

- Rename variable, method, or class – replaces ambiguous or misleading names with ones that clearly express purpose

- Extract method – breaks long or complex methods into smaller, self-contained functions

- Inline temporary variable – removes unnecessary intermediate variables that obscure logic

These changes do not alter behavior but significantly improve maintainability, especially in shared or long-lived codebases.
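The three techniques above can be seen together in a small, hypothetical Python example: the terse `prt` function is renamed to `format_invoice`, the total calculation is extracted into `line_total` and `order_total`, and the temporary variable `tmp` is inlined. All function and field names are invented for illustration.

```python
# Before: unclear name, calculation and formatting mixed, needless temporary.
def prt(o):
    t = 0
    for i in o["items"]:
        t += i["qty"] * i["price"]
    tmp = o["customer"]
    s = f"Invoice for {tmp}\n"
    s += f"Total: {t}"
    return s


# After: each function has one job and a name that states it.
def line_total(item):
    """Extracted method: price of a single invoice line."""
    return item["qty"] * item["price"]


def order_total(order):
    """Extracted method: sum of all line totals."""
    return sum(line_total(item) for item in order["items"])


def format_invoice(order):
    """Renamed from prt; the temporary variable is inlined into the f-string."""
    return f"Invoice for {order['customer']}\nTotal: {order_total(order)}"


order = {"customer": "ACME", "items": [{"qty": 2, "price": 300}, {"qty": 1, "price": 150}]}
print(format_invoice(order) == prt(order))  # True
```

Each step is small enough to verify on its own, which is why these refactorings are usually applied one at a time rather than all at once.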

Reducing duplication

Code duplication increases maintenance effort and the likelihood of inconsistent behavior. Refactoring aims to consolidate shared logic in a single place.

Common approaches:

- Extract method or function – reuse logic across multiple call sites

- Extract class or module – group related responsibilities together

- Pull up method / push down method – move shared behavior to an appropriate level in an inheritance hierarchy

Reducing duplication makes future changes safer, as logic only needs to be updated once.
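A minimal sketch of pull up method, assuming two hypothetical Python export classes that had each grown an identical constructor and `header` method. After the refactoring, the shared logic lives once in a parent class, and only the genuinely different `render` behavior remains in the subclasses.

```python
# Before: identical constructor and header() duplicated in sibling classes.
class PdfExportBefore:
    def __init__(self, title):
        self.title = title

    def header(self):
        return f"== {self.title.upper()} =="

    def render(self, body):
        return f"{self.header()}\n[pdf] {body}"


class CsvExportBefore:
    def __init__(self, title):
        self.title = title

    def header(self):
        return f"== {self.title.upper()} =="

    def render(self, body):
        return f"{self.header()}\n{body}"


# After: shared behavior pulled up into a common parent class.
class Export:
    def __init__(self, title):
        self.title = title

    def header(self):
        return f"== {self.title.upper()} =="


class PdfExport(Export):
    def render(self, body):
        return f"{self.header()}\n[pdf] {body}"


class CsvExport(Export):
    def render(self, body):
        return f"{self.header()}\n{body}"
```

Changing the header format now means editing one method instead of remembering every copy.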

Simplifying conditional logic

Complex conditional logic is a frequent source of bugs and misunderstandings. Refactoring can make such logic more explicit and easier to reason about.

Techniques often used:

- Decompose conditional – split complex conditions into descriptive methods

- Replace conditional with polymorphism – move conditional behavior into specialized classes

- Replace magic numbers with named constants – improve clarity and intent

These refactorings improve testability and reduce the risk of unintended side effects.
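The sketch below applies all three techniques to a hypothetical shipping-cost rule: magic numbers become named constants, the weight check is decomposed into `is_heavy`, and the branching on `kind` is replaced by one class per shipping method. All names and fee values are invented, and the `SHIPPING_METHODS` lookup table is only one of several reasonable ways to select the right class.

```python
# Before: nested conditionals and magic numbers.
def shipping_cost_before(kind, weight_kg):
    if kind == "standard":
        return 5 + weight_kg * 2
    elif kind == "express":
        if weight_kg > 10:
            return 20 + weight_kg * 3 + 15
        return 20 + weight_kg * 3
    else:
        raise ValueError(kind)


# After: named constants replace the magic numbers.
STANDARD_BASE_FEE = 5
STANDARD_RATE_PER_KG = 2
EXPRESS_BASE_FEE = 20
EXPRESS_RATE_PER_KG = 3
HEAVY_PARCEL_THRESHOLD_KG = 10
HEAVY_PARCEL_SURCHARGE = 15


def is_heavy(weight_kg):
    """Decomposed conditional: the rule now has a descriptive name."""
    return weight_kg > HEAVY_PARCEL_THRESHOLD_KG


class ShippingMethod:
    def cost(self, weight_kg):
        raise NotImplementedError


class StandardShipping(ShippingMethod):
    def cost(self, weight_kg):
        return STANDARD_BASE_FEE + weight_kg * STANDARD_RATE_PER_KG


class ExpressShipping(ShippingMethod):
    def cost(self, weight_kg):
        cost = EXPRESS_BASE_FEE + weight_kg * EXPRESS_RATE_PER_KG
        if is_heavy(weight_kg):
            cost += HEAVY_PARCEL_SURCHARGE
        return cost


# Polymorphism replaces the if/elif chain: each class knows its own pricing.
SHIPPING_METHODS = {"standard": StandardShipping(), "express": ExpressShipping()}


def shipping_cost(kind, weight_kg):
    method = SHIPPING_METHODS.get(kind)
    if method is None:
        raise ValueError(kind)  # same error type as the original
    return method.cost(weight_kg)
```

Note that the refactored `shipping_cost` still raises `ValueError` for an unknown method, as the original did; a bare dictionary lookup would raise `KeyError` instead, which would be a subtle behavior change.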

Improving class and module design

Over time, classes and modules may grow beyond their original responsibilities. Refactoring helps realign code with sound design principles.

Typical techniques:

- Extract class – separate responsibilities that do not belong together

- Move method or field – place behavior closer to the data it operates on

- Encapsulate field – control access to internal state

These changes support better separation of concerns and more flexible system evolution.
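A compact, hypothetical Python illustration of the three techniques: the address fields are extracted from `Customer` into an `Address` class, the formatting logic moves onto `Address` because that is the data it reads, and `credit_limit` is encapsulated behind a property. The setter deliberately adds no validation, since introducing new rules would change behavior and belongs in a separate change.

```python
# Before: address data and formatting embedded in Customer; public mutable field.
class CustomerBefore:
    def __init__(self, name, street, city, postcode, credit_limit):
        self.name = name
        self.street = street
        self.city = city
        self.postcode = postcode
        self.credit_limit = credit_limit

    def mailing_label(self):
        return f"{self.name}\n{self.street}, {self.postcode} {self.city}"


class Address:
    """Extract class: address fields now have a home of their own."""

    def __init__(self, street, city, postcode):
        self.street = street
        self.city = city
        self.postcode = postcode

    def one_line(self):
        """Move method: formatting lives next to the data it reads."""
        return f"{self.street}, {self.postcode} {self.city}"


class Customer:
    def __init__(self, name, address, credit_limit):
        self.name = name
        self.address = address
        self._credit_limit = credit_limit  # encapsulated field

    @property
    def credit_limit(self):
        return self._credit_limit

    @credit_limit.setter
    def credit_limit(self, value):
        # Single access point; any future validation would be a separate change.
        self._credit_limit = value

    def mailing_label(self):
        return f"{self.name}\n{self.address.one_line()}"
```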

Refactoring with safety in mind

Most authoritative sources emphasize that refactoring should be performed incrementally and safely, ideally supported by automated tests. Small steps make it easier to validate that behavior remains unchanged and to revert changes if needed.

Common best practices include:

- refactoring one concern at a time

- running tests after each change

- avoiding simultaneous refactoring and feature additions

- using version control to track and review changes
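When code has no tests yet, a common first step is a characterization test: it records what the code currently does, quirks included, so that each refactoring step can be checked against it. The sketch below uses Python's built-in `unittest`; `legacy_normalize_sku` and its sample inputs are hypothetical.

```python
import unittest


def legacy_normalize_sku(raw):
    """Hypothetical legacy function that is about to be refactored."""
    s = raw.strip().upper()
    s = s.replace(" ", "-")
    if not s.startswith("SKU-"):
        s = "SKU-" + s
    return s


class NormalizeSkuCharacterizationTest(unittest.TestCase):
    """Pins down today's observable behavior, including odd edge cases."""

    CASES = {
        "abc 123": "SKU-ABC-123",
        "  sku-9 ": "SKU-9",
        "a  b": "SKU-A--B",  # double space becomes a double hyphen
        "": "SKU-",          # empty input still gets a prefix
    }

    def test_outputs_match_recorded_behavior(self):
        for raw, expected in self.CASES.items():
            with self.subTest(raw=raw):
                self.assertEqual(legacy_normalize_sku(raw), expected)
```

Run it with your usual test runner (for example `python -m unittest`) after every small change; if a case fails, the last step altered behavior and should be reverted or fixed before continuing. The doubled hyphen in `SKU-A--B` is kept on purpose, because a characterization test pins down existing behavior, not ideal behavior.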

Refactoring techniques are intentionally simple, but their cumulative effect can be substantial. Applied consistently, they help keep systems adaptable and reduce the need for disruptive rewrites or large-scale rework later.

Refactoring in Agile, DevOps, and CI/CD environments

In modern software delivery, refactoring is most effective when treated as a routine engineering activity, not a standalone initiative. Agile methodologies, DevOps practices, and CI/CD pipelines provide the structural conditions that make continuous refactoring both practical and safe.

Refactoring in agile development

Agile development emphasizes incremental delivery, frequent feedback, and adaptability to change. Within this context, refactoring is typically embedded into everyday work rather than planned as a separate phase.

Common agile-aligned refactoring practices include:

- refactoring code before extending existing functionality

- improving design opportunistically when touching related areas of the code

- addressing technical debt as part of sprint work rather than deferring it indefinitely

This approach helps teams avoid large refactoring backlogs and keeps codebases aligned with evolving requirements. Refactoring becomes part of maintaining a sustainable development pace, rather than a corrective action taken only when problems become severe.

Role of refactoring in DevOps practices

DevOps focuses on reducing friction between development and operations while increasing deployment frequency and system reliability. Poorly structured code directly undermines these goals by making changes riskier and harder to automate.

In DevOps-oriented teams, refactoring supports:

- more predictable builds and deployments

- improved observability and error diagnosis

- clearer ownership of services and components

- easier automation of operational tasks

As systems become more distributed, refactoring also helps ensure that service boundaries, configuration management, and deployment logic remain understandable and manageable.

Refactoring and CI/CD pipelines

Continuous integration and continuous delivery pipelines are a key enabler of safe refactoring. Automated builds, tests, and deployments provide fast feedback that confirms behavior has not changed after internal code modifications.

Effective refactoring within CI/CD environments typically relies on:

- comprehensive automated test coverage

- frequent commits with small, focused changes

- code reviews that explicitly consider structural quality

- automated quality checks such as static analysis and linters

These mechanisms reduce the risk traditionally associated with refactoring and make it feasible to apply improvements continuously rather than in large batches.

Preparing systems for modernization and cloud adoption

In many organizations, refactoring is closely tied to application modernization and cloud adoption initiatives. For example, when migrating workloads to platforms such as Microsoft Azure, teams often refactor applications to improve modularity, remove hard-coded infrastructure assumptions, or align with managed services and cloud-native patterns.

In this context, refactoring acts as an enabler, allowing systems to evolve gradually while remaining operational. Rather than delaying modernization until a full rewrite is possible, teams use refactoring to reduce risk and complexity step by step.

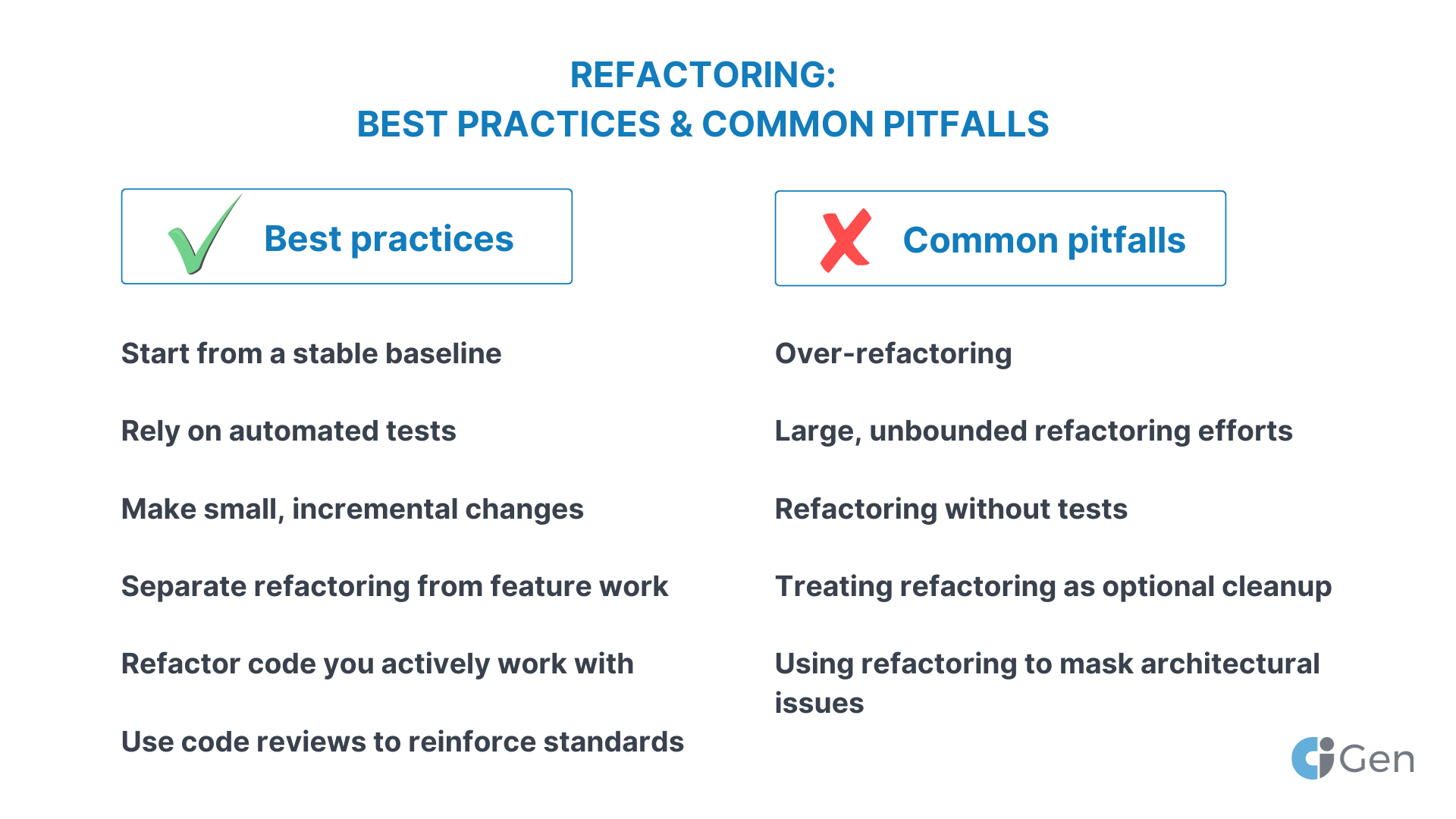

Refactoring best practices and common pitfalls

While refactoring is conceptually straightforward, its effectiveness depends heavily on how it is applied. Teams that treat refactoring as an undisciplined cleanup exercise often introduce risk, while teams that follow clear practices use it to steadily improve code quality with minimal disruption.

Best practices for effective refactoring

Start from a stable baseline

Refactoring should begin from code that is functionally correct. If defects exist, they should be isolated and fixed separately. This makes it easier to confirm that refactoring has not altered behavior.

Rely on automated tests

Automated tests are the primary safety net for refactoring. Unit tests, integration tests, and regression tests provide confidence that behavior remains unchanged after internal modifications. In practice, the absence of tests significantly limits how safely refactoring can be performed.

Make small, incremental changes

Effective refactoring consists of many small steps rather than large, sweeping changes. Small refactorings are:

- easier to review

- simpler to validate

- less risky to roll back

This incremental approach aligns well with version control and CI workflows.

Separate refactoring from feature work

Mixing refactoring with new functionality makes it harder to reason about changes and increases review complexity. A clear separation helps reviewers and stakeholders understand the intent of each change set.

Refactor code you actively work with

Refactoring delivers the most value when applied to code that is being extended or modified. This avoids unnecessary changes to stable areas and ensures effort is focused where it improves productivity.

Use code reviews to reinforce standards

Code reviews are an effective mechanism for maintaining structural quality. Reviewing refactoring changes helps spread shared understanding of design principles and prevents regression into poor patterns.

Common pitfalls to avoid during the refactoring process

Over-refactoring

Not every imperfection requires immediate attention. Refactoring should be guided by maintainability, risk, and future change expectations. Excessive refactoring can consume time without delivering proportional value.

Large, unbounded refactoring efforts

Refactoring initiatives without clear scope or stopping criteria often expand indefinitely. This increases delivery risk and can delay business outcomes. Clear goals and boundaries help keep refactoring aligned with priorities.

Refactoring without tests

Refactoring in the absence of tests significantly increases the risk of unintended behavior changes. In such cases, teams often compensate with manual testing, which is slower and less reliable.

Using refactoring to mask architectural issues

Refactoring improves internal code structure but does not solve fundamental architectural mismatches. Attempting to refactor around structural limitations can delay necessary architectural decisions.

Treating refactoring as optional cleanup

When refactoring is consistently deprioritized, technical debt accumulates until it becomes a blocker. Sustainable teams treat refactoring as part of normal engineering work, not an optional extra.

Refactoring is most effective when it is intentional, incremental, and supported by engineering discipline. Applied consistently, these practices help teams reduce long-term risk, maintain delivery speed, and prepare systems for future evolution.

Refactoring in cloud migration and application modernization

Refactoring plays a central role in application modernization, particularly when organizations move workloads from on-premises environments to the cloud. While some applications can be migrated with minimal changes, many systems require refactoring to fully benefit from modern platforms and operating models.

Refactoring as part of migration strategies

In cloud adoption frameworks, refactoring is typically positioned between simple relocation and full redesign. Rather than moving applications unchanged or rebuilding them entirely, teams selectively refactor parts of the codebase to remove constraints that limit scalability, resilience, or operational efficiency.

Within the Microsoft Azure ecosystem, this approach aligns with commonly referenced migration paths where applications are adapted to:

- decouple business logic from infrastructure

- externalize configuration and secrets

- replace self-managed components with managed cloud services

This allows organizations to modernize incrementally while keeping systems operational throughout the transition.
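Externalizing configuration can be sketched as follows: instead of hard-coding connection details, the application reads them from environment variables, which a cloud platform can populate from its own configuration and secret-management services. The variable names (`APP_DATABASE_URL` and the others), the defaults, and the `AppConfig` fields are assumptions for illustration.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class AppConfig:
    database_url: str
    upload_container: str
    request_timeout_s: float


REQUIRED_SETTINGS = ("APP_DATABASE_URL",)  # hypothetical: must be provided by the environment


def load_config(env: Mapping[str, str] = os.environ) -> AppConfig:
    """Build configuration from the environment instead of hard-coded values."""
    missing = [name for name in REQUIRED_SETTINGS if name not in env]
    if missing:
        raise RuntimeError(f"missing required settings: {', '.join(missing)}")
    return AppConfig(
        # Secrets such as connection strings are injected, never committed to code.
        database_url=env["APP_DATABASE_URL"],
        # Non-secret settings can fall back to safe defaults.
        upload_container=env.get("APP_UPLOAD_CONTAINER", "uploads"),
        request_timeout_s=float(env.get("APP_REQUEST_TIMEOUT_S", "5")),
    )
```

Accepting `env` as a parameter keeps the function easy to test with a plain dictionary, without touching real environment variables.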

Typical refactoring drivers during modernization

When preparing applications for cloud environments, refactoring is often triggered by legacy assumptions embedded in the codebase, such as:

- tight coupling to on-premises infrastructure

- reliance on local file systems or static configuration files

- synchronous, tightly coupled communication patterns

- monolithic designs that limit independent scaling

Refactoring addresses these constraints by improving modularity, clarifying responsibilities, and introducing abstraction layers that make the system more adaptable to cloud-native patterns.
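Introducing an abstraction layer over a local file system might look like the sketch below. Business code depends only on the hypothetical `DocumentStore` interface; `LocalFileStore` keeps the current on-premises behavior, and a cloud object-storage adapter could later implement the same two methods (not shown, to avoid guessing at a specific SDK). `InMemoryStore` serves as a test double.

```python
from abc import ABC, abstractmethod
from pathlib import Path


class DocumentStore(ABC):
    """Abstraction that business code depends on instead of the file system."""

    @abstractmethod
    def save(self, key: str, data: bytes) -> None: ...

    @abstractmethod
    def load(self, key: str) -> bytes: ...


class LocalFileStore(DocumentStore):
    """Preserves the current behavior: files stored under a root folder."""

    def __init__(self, root):
        self.root = Path(root)

    def save(self, key, data):
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def load(self, key):
        return (self.root / key).read_bytes()


class InMemoryStore(DocumentStore):
    """Test double; a cloud blob-storage adapter would implement the same methods."""

    def __init__(self):
        self._items = {}

    def save(self, key, data):
        self._items[key] = data

    def load(self, key):
        return self._items[key]


def archive_invoice(store: DocumentStore, invoice_id: str, pdf: bytes) -> str:
    """Business logic no longer knows where or how documents are stored."""
    key = f"invoices/{invoice_id}.pdf"
    store.save(key, pdf)
    return key
```

Because `archive_invoice` receives its store as a parameter, switching from local disk to managed storage becomes a configuration decision rather than a change to business logic.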

Enabling cloud-native capabilities

Refactoring is often a prerequisite for adopting cloud-native services and architectures. For example, teams may refactor applications to:

- split large codebases into more manageable components

- prepare services for independent deployment and scaling

- improve observability through clearer boundaries and logging

- align with platform services such as managed databases, messaging, or identity providers

These changes are typically internal and behavior-preserving, but they unlock operational and scalability benefits that would otherwise remain inaccessible.

Risk management during migration

From a risk perspective, refactoring supports controlled modernization. Instead of introducing large-scale changes all at once, teams can refactor incrementally, validate behavior through automated testing, and deploy changes progressively. This reduces the likelihood of extended outages or regressions during migration.

In many real-world scenarios, refactoring is a continuous activity that accompanies modernization over time. Applications evolve step by step, allowing organizations to balance delivery pressure with long-term sustainability.

Refactoring as a long-term modernization investment

While refactoring does not immediately change functionality, its impact becomes visible over time. Cleaner internal structure enables faster iteration, easier integration with new services, and smoother adoption of future architectural changes. In cloud environments where systems are expected to evolve continuously, refactoring acts as a foundation rather than an optional enhancement.

Measuring the business value of refactoring

One of the most common challenges with refactoring is that its value is indirect. Unlike new features, refactoring does not immediately change user-facing functionality, which can make it harder to justify in business terms. However, its impact can be measured through a combination of engineering, delivery, and operational indicators.

Productivity and delivery metrics

Refactoring often translates into measurable improvements in how quickly and safely teams can deliver changes. Common indicators include:

- Lead time for changes – how long it takes to move from code commit to production

- Deployment frequency – how often teams can release updates without disruption

- Change failure rate – how often deployments result in incidents or rollbacks

As code structure improves, teams typically experience shorter development cycles and fewer regressions, even though refactoring itself does not add features.
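These three metrics can be derived from basic deployment records. A minimal sketch, assuming a hypothetical `Deployment` record with commit and deploy timestamps and a flag for whether the deployment led to an incident or rollback; in practice this data usually comes from CI/CD and incident-management tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    commit_time: datetime   # when the change was committed
    deploy_time: datetime   # when it reached production
    caused_failure: bool    # led to an incident or rollback


def delivery_metrics(deployments, period_days):
    """Summarize lead time, deployment frequency, and change failure rate."""
    if not deployments:
        raise ValueError("no deployments in the period")
    lead_times = [d.deploy_time - d.commit_time for d in deployments]
    failures = sum(d.caused_failure for d in deployments)
    return {
        "median_lead_time": median(lead_times),
        "deployments_per_week": len(deployments) * 7 / period_days,
        "change_failure_rate": failures / len(deployments),
    }


start = datetime(2025, 1, 6, 9, 0)
history = [
    Deployment(start, start + timedelta(hours=2), False),
    Deployment(start, start + timedelta(hours=4), True),
    Deployment(start, start + timedelta(hours=30), False),
]
print(delivery_metrics(history, period_days=7))
```

The median is used for lead time because a single unusually slow change would otherwise dominate an average. Comparing these numbers before and after a refactoring effort is more informative than any single snapshot.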

Maintainability and cost indicators

From a cost perspective, refactoring helps reduce the ongoing effort required to maintain and extend systems. Signals that refactoring is delivering value include:

- reduced time spent understanding existing code

- lower effort estimates for similar types of changes over time

- fewer production defects linked to legacy or fragile components

- improved onboarding speed for new engineers

These indicators are especially relevant for long-lived systems where maintenance represents the majority of engineering spend.

Quality and risk reduction metrics

Refactoring also contributes to risk reduction, which can be evaluated through quality-related measures such as:

- declining defect density in modified areas

- improved test coverage and test reliability

- reduced severity and frequency of production incidents

- clearer ownership and accountability for components or services

Although these outcomes are not always immediately visible, they tend to compound over time as the codebase stabilizes.

Refactoring and technical debt tracking

Some organizations explicitly track technical debt using static analysis tools or internal scoring models. While these measurements are imperfect, trends over time can be useful when combined with delivery and quality metrics.

Effective refactoring initiatives typically show:

- stabilization or reduction of technical debt indicators

- fewer high-risk hotspots in frequently changed code

- improved consistency with agreed design and coding standards

The emphasis should be on directional improvement, not absolute scores.
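One common heuristic for finding high-risk hotspots in frequently changed code combines change frequency with a complexity score. The sketch below counts file changes from text shaped like the output of `git log --name-only --pretty=format:` (one file per line, blank lines between commits) and multiplies each count by a complexity figure assumed to come from a static-analysis tool. The file names and numbers are made up.

```python
from collections import Counter


def count_changes(git_log_output):
    """Count how often each file appears in `git log --name-only` style output."""
    return Counter(line.strip() for line in git_log_output.splitlines() if line.strip())


def hotspots(change_counts, complexity, top_n=3):
    """Rank files by change frequency multiplied by complexity."""
    scores = {path: change_counts.get(path, 0) * score for path, score in complexity.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:top_n]


log_text = """
billing.py
utils.py

billing.py
auth.py

billing.py
utils.py

auth.py
"""
complexity = {"billing.py": 40, "auth.py": 25, "utils.py": 5, "report.py": 30}
print(hotspots(count_changes(log_text), complexity))
# [('billing.py', 120), ('auth.py', 50), ('utils.py', 10)]
```

A file that is both complex and frequently modified is usually a better refactoring candidate than a complex file nobody touches, which matches the advice to refactor code you actively work with.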

Aligning refactoring with business goals

To make refactoring visible at a business level, teams often link it to concrete objectives such as:

- faster time-to-market for new features

- improved system reliability and uptime

- lower operational and support costs

- readiness for modernization, integration, or scaling initiatives

When framed this way, refactoring is a supporting investment that enables delivery, stability, and long-term adaptability.

Refactoring as a long-term engineering discipline

Refactoring is a foundational practice in modern software engineering, focused on improving internal code structure without changing external behavior. While it does not deliver new features directly, it plays a critical role in keeping systems maintainable, adaptable, and safe to evolve over time.

As applications grow and change, complexity and technical debt tend to accumulate naturally. Refactoring provides a structured way to manage this complexity incrementally, allowing teams to improve code quality while continuing to deliver value. When embedded into everyday development, it helps prevent the gradual degradation that often leads to costly rewrites or disruptive modernization efforts.

In contemporary delivery models, particularly agile, DevOps, and cloud-based environments, refactoring is best understood as an enabling activity. It supports faster development cycles, reduces operational risk, and prepares systems for architectural change, including cloud migration and application modernization on platforms such as Microsoft Azure.

From a business perspective, the value of refactoring becomes visible through improved delivery speed, reduced defect rates, lower maintenance costs, and greater confidence in making changes. These outcomes are especially important for long-lived systems that must evolve continuously in response to changing market and technical conditions.

Ultimately, refactoring is about maintaining engineering optionality, ensuring that software remains understandable, adaptable, and economically viable throughout its lifecycle. Treated as a disciplined, ongoing practice, refactoring helps organizations balance short-term delivery with long-term sustainability.